Breaking News: Meta’s Adaptive Ranking Model Redefines Real-Time Ad Serving at LLM Scale

Meta has launched an Adaptive Ranking Model that it says solves the industry’s “inference trilemma”, the three-way trade-off between model complexity, sub-second latency, and serving cost, for its ads recommendation systems. The system, live on Instagram since Q4 2025, delivers a 3% increase in ad conversions and a 5% increase in click-through rate for targeted users, the company announced today.

“This is not just an incremental improvement; it fundamentally bends the inference scaling curve,” said a Meta engineering lead speaking on condition of anonymity. “We can now serve LLM-scale models for every ad request without sacrificing the speed or cost-efficiency that a global platform serving billions requires.”

The Core Announcement

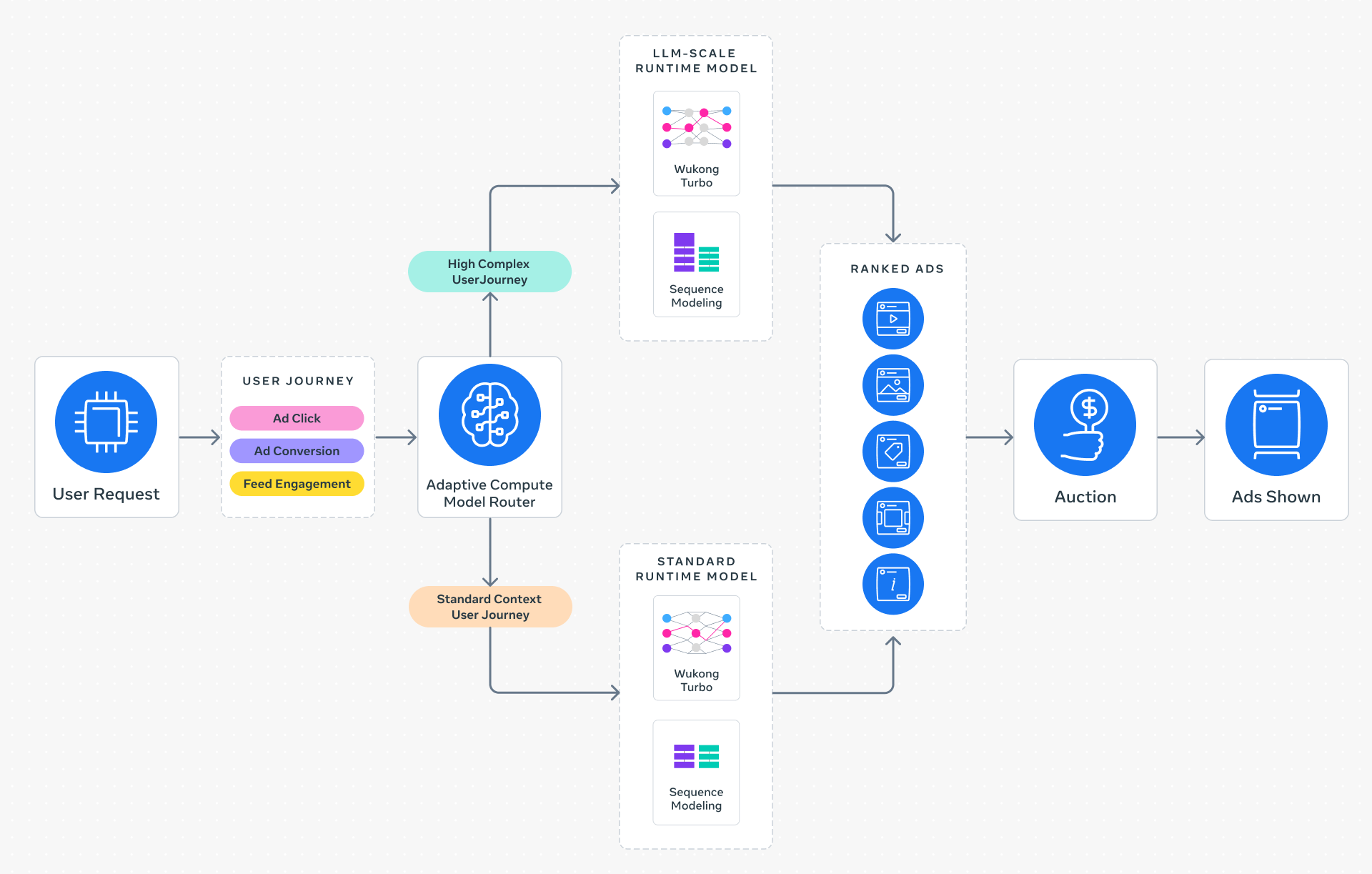

At the heart of the announcement is a shift from a “one-size-fits-all” inference approach to intelligent request routing. The Adaptive Ranking Model dynamically aligns model complexity with each user’s context and intent, ensuring every ad request is processed by the most effective yet efficient model available.

This allows Meta to maintain strict sub-second latency while deploying models with up to 1 trillion parameters, a scale previously considered impossible for real-time advertising systems. The company is now offering this technology to all advertisers through its existing Ads Manager platform.

Background: The Inference Trilemma

For years, the ads industry has struggled with a fundamental tension: larger, more complex models (such as large language models) provide a deeper understanding of user intent, but they require immense compute and memory, which makes them too slow and too expensive for real-time bidding environments. Meta calls this three-way tension between model complexity, latency, and cost the “inference trilemma.”

Traditional approaches forced a trade-off: either use simpler models and give up accuracy, or use larger models and accept latency spikes and higher serving cost. Meta’s prior recommendation systems already scaled to billions of users, but reaching LLM scale required a complete architectural rethink.

The company’s solution rests on three innovative pillars:

- Inference-Efficient Model Scaling: A request-centric architecture that serves LLM-scale models within a sub-second latency budget by spending compute only where it is needed.

- Model/System Co-Design: Hardware-aware model architectures that align with the capabilities and limitations of Meta’s heterogeneous server fleet.

- Reimagined Serving Infrastructure: Multi-card deployments and hardware-specific optimizations that unlock O(1T) parameter scaling with unprecedented efficiency.
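
To give a sense of why O(1T) parameters imply multi-card deployments, here is a back-of-the-envelope sketch. The announcement does not state the numeric precision, the per-card memory, or how parameters are sharded, so the 2-byte and 1-byte parameter sizes and the 80 GB card capacity below are illustrative assumptions, and the function name is hypothetical. The estimate covers weights only and ignores activations, caches, and replication.

```python
def min_cards_for_weights(num_params: int, bytes_per_param: int, card_memory_gb: int) -> int:
    """Lower bound on the number of accelerator cards needed to hold the weights alone."""
    total_bytes = num_params * bytes_per_param
    card_bytes = card_memory_gb * 10**9
    # Integer ceiling division avoids floating-point rounding.
    return (total_bytes + card_bytes - 1) // card_bytes


ONE_TRILLION = 10**12  # the O(1T) scale cited in the article

# Assumed: 16-bit weights (2 bytes each) on hypothetical 80 GB cards.
print(min_cards_for_weights(ONE_TRILLION, 2, 80))  # 25

# Assumed: 8-bit weights (1 byte each) on the same hypothetical cards.
print(min_cards_for_weights(ONE_TRILLION, 1, 80))  # 13
```

Under these assumptions the weights alone cannot fit on a single card, so the model has to be split across many cards and served as one unit.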

What This Means for Advertisers & Users

For advertisers, the Adaptive Ranking Model translates directly to higher return on ad spend. The 5% CTR lift and 3% conversion increase – already validated on Instagram – show that LLM-scale intelligence can be deployed without extra latency or cost. “Small and medium businesses will benefit most because they get the same powerful targeting as large enterprises,” said a Meta product manager in a press briefing.

For users, the experience becomes more relevant without any slowdown. The system’s ability to understand subtle intent – such as distinguishing between someone browsing for inspiration vs. ready to purchase – means ads feel less intrusive and more helpful. Importantly, Meta claims this is achieved while maintaining privacy standards, as the system runs entirely on aggregated, anonymized signals.

Industry analysts are already calling this a potential inflection point. “If Meta can sustain these gains at global scale, it will force every competitor to rethink their ad infrastructure,” commented Dr. Elena Voss, a RecSys researcher at Stanford. “The inference trilemma was the biggest barrier to next-gen ads – Meta just blew it up.”

Technical Highlights

The Adaptive Ranking Model replaces static model-size selection with per-request routing. For example, a user scrolling through Instagram during a busy commute might be served by a lightweight model, while a user actively searching for products gets the full LLM-scale treatment, with both requests answered within the same sub-second budget.
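
The routing idea can be illustrated with a minimal sketch. This is not Meta’s implementation: the tier names, latency estimates, quality scores, `intent_score` signal, and threshold are all hypothetical values chosen for illustration. The sketch picks the cheapest model for low-intent requests and the most capable model that fits the latency budget for high-intent requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    est_latency_ms: float  # hypothetical per-request latency estimate
    quality: float         # hypothetical relative ranking quality


@dataclass(frozen=True)
class AdRequest:
    user_id: str
    intent_score: float       # hypothetical signal in [0, 1]; higher means closer to purchase
    latency_budget_ms: float  # time available to rank ads for this request


# Hypothetical tiers, ordered from cheapest to most capable.
TIERS = [
    ModelTier("lightweight", est_latency_ms=20.0, quality=0.70),
    ModelTier("medium", est_latency_ms=120.0, quality=0.85),
    ModelTier("llm_scale", est_latency_ms=600.0, quality=1.00),
]


def route(request: AdRequest, tiers=TIERS, intent_threshold: float = 0.5) -> ModelTier:
    """Choose a model tier for one request (illustrative policy only)."""
    feasible = [t for t in tiers if t.est_latency_ms <= request.latency_budget_ms]
    if not feasible:
        # Nothing fits the budget: fall back to the fastest tier.
        return min(tiers, key=lambda t: t.est_latency_ms)
    if request.intent_score < intent_threshold:
        # Low intent: spend as little compute as possible.
        return min(feasible, key=lambda t: t.est_latency_ms)
    # High intent: use the best model that still meets the budget.
    return max(feasible, key=lambda t: t.quality)


commuter = AdRequest("u1", intent_score=0.2, latency_budget_ms=1000.0)
shopper = AdRequest("u2", intent_score=0.9, latency_budget_ms=1000.0)
print(route(commuter).name)  # lightweight
print(route(shopper).name)   # llm_scale
```

A production system would presumably learn this policy rather than hard-code a threshold, but the sketch shows the core trade: compute is spent where the expected benefit is highest, and every request stays inside its latency budget.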

Meta confirmed that the system is already deployed across all Instagram ad placements and will expand to Facebook and other surfaces in early 2026. The company plans to open-source parts of the co-design framework to accelerate industry adoption.

Conclusion: A New Benchmark in Real-Time AI

By bending the inference scaling curve, Meta has not only solved its own trilemma but set a new benchmark for real-time, LLM-scale serving. The adaptive approach could inspire similar breakthroughs in other latency-sensitive applications – from autonomous driving to financial trading. For now, advertisers have a powerful new lever, and users get ads that truly understand them.

“This is the start of the era where LLM complexity meets real-time demands. Meta just showed it’s possible,” concluded the engineering lead.